The udev rule I suggested is ONLY applied when you plug your thumb drive into a USB slot and it gets mounted automatically; udisks does that for you.

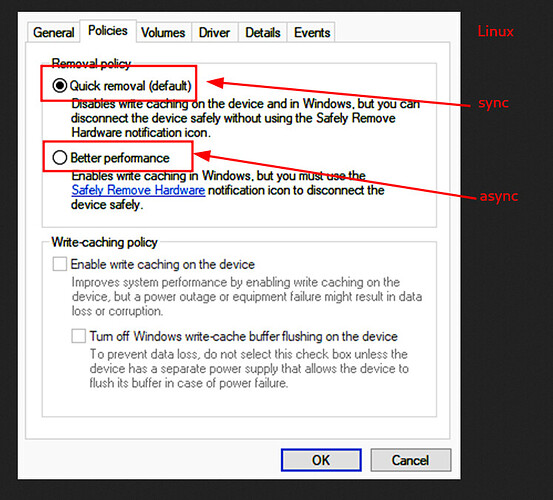

If you mount the USB drive via fstab, the default (async) is used unless sync is explicitly specified.

Nothing gets overwritten. fstab and udisks are separate mechanisms: fstab is for static mounts, while udisks mounts removable drives automatically.

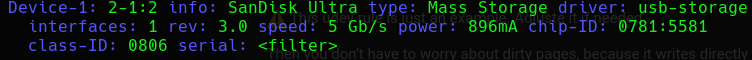

It is reliable. USB 3 has a theoretical bandwidth of 5 Gb/s. Note that this bandwidth belongs to the hub: if you put two USB drives into the same hub, it will be divided between them. However, the speed of the drive itself also comes into play.
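To put the bus limit next to the measurements, here is a rough back-of-the-envelope calculation. It assumes a USB 3.0 (5 Gb/s) link with 8b/10b line encoding and takes the 100MB test file and the five exfat async runs further down at face value; protocol overhead is ignored, so the real ceiling is somewhat lower:

```latex
\begin{aligned}
\text{USB 3 link after 8b/10b encoding:}\quad & 5\ \text{Gb/s} \times \tfrac{8}{10} = 4\ \text{Gb/s} = 500\ \text{MB/s} \\
\text{exfat async, mean of 5 runs:}\quad & \frac{100\ \text{MB}}{8.67\ \text{s}} \approx 11.5\ \text{MB/s}
\end{aligned}
```

If the stick really sits on a USB 3 port, the bus would allow more than 40 times the observed throughput, so in this test the flash drive, not the hub, is the bottleneck.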

No, it is not. With async, the displayed speed is NEVER the real speed. So you have to decide for yourself: do you want the data written right away so you can unplug the thumb drive, or do you want to use the cache? If the drive is always connected, it makes no difference, but if you only need it for a short time, the sync option is always preferable.
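With async, data that `cp` has already "finished" can still be sitting in the page cache. A quick way to see how much is still waiting is the kernel's `Dirty` and `Writeback` counters in `/proc/meminfo`. A minimal sketch; `show_pending_writes` is a hypothetical helper name, and the counters are system-wide, not per device:

```shell
#!/usr/bin/env bash
# Hypothetical helper: show how much data is still waiting to be written out.
#   Dirty     = modified pages in RAM that have not been written to any device yet
#   Writeback = pages that are being written right now
# Both values are system-wide, not specific to the thumb drive.
show_pending_writes() {
  grep -E '^(Dirty|Writeback):' /proc/meminfo
}

show_pending_writes
```

Running it right after a copy to the stick and again after `sync` returns shows the cached data draining; unplugging while `Dirty` is still large is exactly the risky case.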

So, end of story for me. You can do the hacky stuff with dirty pages, but the normal way is simply to use a mount option, as I suggested.
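For reference, the "dirty pages" knobs are the `vm.dirty_*` sysctls, and they apply globally, not per device. The sketch below only reads them through `/proc/sys/vm`; `show_dirty_limits` is a hypothetical name, and the commented-out write uses made-up values purely as an illustration, not a recommendation:

```shell
#!/usr/bin/env bash
# Print the current dirty-page thresholds (read-only, no root needed).
# Each *_ratio / *_bytes pair is mutually exclusive: writing one of them
# makes the other read back as 0.
show_dirty_limits() {
  local knob
  for knob in dirty_background_ratio dirty_ratio dirty_background_bytes dirty_bytes; do
    printf 'vm.%s = %s\n' "$knob" "$(cat "/proc/sys/vm/$knob")"
  done
}

show_dirty_limits

# Example only (hypothetical values): start background writeback at 16 MiB and
# throttle writers at 48 MiB of dirty data. Needs root and is not persistent.
# sudo sysctl -w vm.dirty_background_bytes=16777216 vm.dirty_bytes=50331648
```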

I must admit that I was wrong here, judging by the results of my small test below.

Here is a simple test from my side:

100MB tempfile on exfat with async

$ mount -t exfat | cut -d" " -f1,5,6 | sed -r "s;\(.+,(|sync).+\);\\1;g"

/dev/sdf1 exfat

$ for x in $(seq 1 5); do export TIMEFORMAT='%3lR'; echo "$(time $(cp ~/tempfile /run/media/user/Ventoy && sync))" && rm -f /run/media/user/Ventoy/tempfile && sync && sleep 1 ; done

Results:

0m6,971s

0m6,837s

0m10,505s

0m8,418s

0m10,597s
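The timing one-liner used here works, but `echo "$(time $(…))"` is roundabout: the inner command substitution runs `cp` and `sync`, `time` writes its report to stderr, and the outer `echo` only adds an empty line. A slightly more conventional sketch of the same measurement times a brace group directly; `bench_copy` is a hypothetical function name:

```shell
#!/usr/bin/env bash
# Hypothetical helper: time "copy + forced sync" several times in a row.
# Usage: bench_copy SOURCE_FILE TARGET_DIR [RUNS]
bench_copy() {
  local src=$1 dir=$2 runs=${3:-5} i
  local TIMEFORMAT='%3lR'          # same report format as above, e.g. 0m6,971s
  for ((i = 0; i < runs; i++)); do
    # Timing the brace group covers cp AND the flush to the stick.
    time { cp -- "$src" "$dir/" && sync; }
    # Remove the copy again and flush, so every run starts from the same state.
    rm -f -- "$dir/$(basename -- "$src")" && sync
    sleep 1                        # short pause between runs, as in the original loop
  done
}
```

With the paths from this test it would be called as `bench_copy ~/tempfile /run/media/user/Ventoy 5`; the reports still go to stderr, one line per run.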

Apply the udev rule:

echo 'SUBSYSTEMS=="usb", SUBSYSTEM=="block", ENV{ID_FS_USAGE}=="filesystem", ENV{UDISKS_MOUNT_OPTIONS_DEFAULTS}+="sync", ENV{UDISKS_MOUNT_OPTIONS_ALLOW}+="sync"' | sudo tee /etc/udev/rules.d/99-usbsync.rules && sudo udevadm control --reload

Unplug and replug the flash drive.

100MB tempfile on exfat with sync

$ mount -t exfat | cut -d" " -f1,5,6 | sed -r "s;\(.+,(|sync),.+\);\\1;g"

/dev/sdf1 exfat sync
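The `sed` pipeline above depends on where `sync` appears in the option list. A less fragile sketch reads the options for a mount point straight from `/proc/self/mounts`; `mount_opts` and `has_sync` are hypothetical helper names, and mount points containing spaces (escaped as `\040` in that file) are not handled:

```shell
#!/usr/bin/env bash
# Hypothetical helper: print the mount options of a mount point.
# If several entries are stacked on the same path, the last (topmost) one wins.
# Exits with status 1 if the path is not a mount point.
mount_opts() {
  awk -v mp="$1" '$2 == mp { opts = $4 } END { if (opts == "") exit 1; print opts }' /proc/self/mounts
}

# Succeeds only if "sync" is one of the comma-separated options.
has_sync() {
  mount_opts "$1" | tr ',' '\n' | grep -qx sync
}
```

For example, `has_sync /run/media/user/Ventoy && echo "sync is active"` after replugging the stick.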

$ for x in $(seq 1 5); do export TIMEFORMAT='%3lR'; echo "$(time $(cp ~/tempfile /run/media/user/Ventoy && sync))" && rm -f /run/media/user/Ventoy/tempfile && sync && sleep 1 ; done

Results:

0m45,387s

0m58,523s

0m59,260s

1m4,165s

1m2,894s

100MB tempfile on vfat with async

sudo rm -f /etc/udev/rules.d/99-usbsync.rules && sudo udevadm control --reload

Unplug and replug the flash drive.

$ mount -t vfat | grep -v sda1 | cut -d" " -f1,5,6

/dev/sdf1 vfat (rw,nosuid,nodev,relatime,uid=1000,gid=1000,fmask=0022,dmask=0022,codepage=437,iocharset=ascii,shortname=mixed,showexec,utf8,flush,errors=remount-ro,uhelper=udisks2)

$ for x in $(seq 1 5); do export TIMEFORMAT='%3lR'; echo "$(time $(cp ~/tempfile /run/media/user/USB8GB/tempfile && sync))" && rm -f /run/media/user/USB8GB/tempfile && sync && sleep 1 ; done

Results:

0m20,836s

0m17,401s

0m9,607s

0m7,731s

0m9,598s

100MB tempfile on vfat with sync

$ mount -t vfat | grep -v sda1 | cut -d" " -f1,5,6

/dev/sdf1 vfat (rw,nosuid,nodev,relatime,sync,uid=1000,gid=1000,fmask=0022,dmask=0022,codepage=437,iocharset=ascii,shortname=mixed,showexec,utf8,flush,errors=remount-ro,uhelper=udisks2)

$ for x in $(seq 1 5); do export TIMEFORMAT='%3lR'; echo "$(time $(cp ~/tempfile /run/media/user/USB8GB/tempfile && sync))" && rm -f /run/media/user/USB8GB/tempfile && sync && sleep 1 ; done

Results:

20m50,983s

16m11,129s

16m15,609s

16m18,828s

16m22,699s

100MB tempfile on ext2 with async

$ mount -t ext2 | cut -d" " -f1,5,6

/dev/sdf1 ext2 (rw,nosuid,nodev,relatime,errors=remount-ro,uhelper=udisks2)

$ for x in $(seq 1 5); do export TIMEFORMAT='%3lR'; echo "$(time $(cp ~/tempfile /run/media/user/USB8GB/tempfile && sync))" && rm -f /run/media/user/USB8GB/tempfile && sync && sleep 1 ;done

Results:

0m13,881s

0m9,270s

0m8,120s

0m8,574s

0m7,631s

100MB tempfile on ext2 with sync

$ mount -t ext2 | cut -d" " -f1,5,6

/dev/sdf1 ext2 (rw,nosuid,nodev,relatime,sync,errors=remount-ro,uhelper=udisks2)

$ for x in $(seq 1 5); do export TIMEFORMAT='%3lR'; echo "$(time $(cp ~/tempfile /run/media/user/USB8GB/tempfile && sync))" && rm -f /run/media/user/USB8GB/tempfile && sync && sleep 1 ;done

Results:

4m38,701s

3m58,476s

3m55,660s

4m5,261s

4m1,574s
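To compare the configurations at a glance, the five runs of each can be averaged. A minimal sketch with hypothetical helper names; it assumes the bash `%3lR` format with a comma as decimal separator, exactly as in the results above:

```shell
#!/usr/bin/env bash
# to_seconds: convert a time such as "1m4,165s" into seconds ("64.165").
to_seconds() {
  printf '%s\n' "$1" |
    awk -F'm' '{ sub(/s$/, "", $2); sub(/,/, ".", $2); printf "%.3f\n", $1 * 60 + $2 }'
}

# mean_seconds: arithmetic mean of several such times, one decimal place.
mean_seconds() {
  local t
  for t in "$@"; do to_seconds "$t"; done |
    awk '{ sum += $1 } END { printf "%.1f\n", sum / NR }'
}

mean_seconds 0m6,971s 0m6,837s 0m10,505s 0m8,418s 0m10,597s    # exfat async
mean_seconds 0m45,387s 0m58,523s 0m59,260s 1m4,165s 1m2,894s   # exfat sync
```

Applied to all six series this gives, in seconds, async vs. sync: exfat 8.7 vs. 58.0, vfat 13.0 vs. 1031.8, ext2 9.5 vs. 247.9, i.e. sync is roughly 7, 79 and 26 times slower respectively.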

What is my conclusion here? There is indeed a performance impact, but it also depends on the file system. Maybe on vfat the flush and sync options together have a negative effect. exfat has the best results in both sync and async mode (f2fs might do similarly well because it is also optimized for flash drives, but it is exotic on thumb drives). With async, all of them have similar results (more or less), so there is no doubt: async is superior, even if you don't adjust the dirty pages.

The times also include the forced sync after copying.

After seeing these real-life results, I am against using the sync option, and I will not promote it anymore. Adjusting the dirty pages is not ideal either (at least for me), so I hope the kernel devs will find a solution. In the meantime, I just stay aware of it (as I always have been) and run a sync command after copying something to the thumb drive.
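That habit can be wrapped in a small function. A minimal sketch, assuming GNU coreutils `sync`, which since version 8.24 accepts `-f` to flush only the file system containing a given path; older or non-GNU versions fall back to a plain global `sync`. `copy_and_flush` is a hypothetical name:

```shell
#!/usr/bin/env bash
# Hypothetical helper: copy files to the stick, then wait until they are written.
# Usage: copy_and_flush SOURCE... TARGET_DIR
copy_and_flush() {
  local dest=${!#}                 # the last argument is the target directory
  cp -- "$@" || return             # stop (with cp's exit status) if copying failed
  # Flush only the file system holding $dest; fall back to a global sync.
  sync -f "$dest" 2>/dev/null || sync
}
```

For example `copy_and_flush ~/tempfile /run/media/user/Ventoy`; once it returns, the data has been flushed, and the stick can be ejected as usual.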